Fast Autocomplete Search for Your Website

Every website deserves a great search engine - but building a search engine can be a lot of work, and hosting it can quickly get expensive.

I’m going to build a search engine for 24 ways that’s fast enough to support autocomplete (a.k.a. typeahead) search queries and can be hosted for free. I’ll be using wget, Python, SQLite, Jupyter, sqlite-utils and my open source Datasette tool to build the API backend, and a few dozen lines of modern vanilla JavaScript to build the interface.

Try it out here, then read on to see how I built it.

First step: crawling the data

The first step in building a search engine is to grab a copy of the data that you plan to make searchable.

There are plenty of potential ways to do this: you might be able to pull it directly from a database, or extract it using an API. If you don’t have access to the raw data, you can imitate Google and write a crawler to extract the data that you need.

I’m going to do exactly that against 24 ways: I’ll build a simple crawler using wget, a command-line tool that features a powerful “recursive” mode that’s ideal for scraping websites.

We’ll start at the https://24ways.org/archives/ page, which links to an archived index for every year that 24 ways has been running.

Then we’ll tell wget to recursively crawl the website, using the --recursive flag.

We don’t want to fetch every single page on the site - we’re only interested in the actual articles. Luckily, 24 ways has nicely designed URLs, so we can tell wget that we only care about pages that start with one of the years it has been running, using the -I argument like this: -I /2005,/2006,/2007,/2008,/2009,/2010,/2011,/2012,/2013,/2014,/2015,/2016,/2017

We want to be polite, so let’s wait for 2 seconds between each request rather than hammering the site as fast as we can: --wait 2

The first time I ran this, I accidentally downloaded the comments pages as well. We don’t want those, so let’s exclude them from the crawl using -X "/*/*/comments".

Finally, it’s useful to be able to run the command multiple times without downloading pages that we have already fetched. We can use the --no-clobber option for this.

Tie all of those options together and we get this command:

wget --recursive --wait 2 --no-clobber \
  -I /2005,/2006,/2007,/2008,/2009,/2010,/2011,/2012,/2013,/2014,/2015,/2016,/2017 \
  -X "/*/*/comments" \
  https://24ways.org/archives/

If you leave this running for a few minutes, you’ll end up with a folder structure something like this:

$ find 24ways.org

24ways.org

24ways.org/2013

24ways.org/2013/why-bother-with-accessibility

24ways.org/2013/why-bother-with-accessibility/index.html

24ways.org/2013/levelling-up

24ways.org/2013/levelling-up/index.html

24ways.org/2013/project-hubs

24ways.org/2013/project-hubs/index.html

24ways.org/2013/credits-and-recognition

24ways.org/2013/credits-and-recognition/index.html

...

As a quick sanity check, let’s count the number of HTML pages we have retrieved:

$ find 24ways.org | grep index.html | wc -l

328

There’s one last step! We got everything up to 2017, but we need to fetch the articles for 2018 (so far) as well. They aren’t linked from /archives/ yet, so we need to point our crawler at the site’s front page instead:

wget --recursive --wait 2 --no-clobber \
  -I /2018 \
  -X "/*/*/comments" \
  https://24ways.org/

Thanks to --no-clobber, this is safe to run every day in December to pick up any new content.

We now have a folder on our computer containing an HTML file for every article that has ever been published on the site! Let’s use them to build ourselves a search index.
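Since the next steps happen in Python, it can be handy to repeat that sanity check from there. Here’s a minimal sketch: the count_article_pages helper is a name I made up for illustration, and it assumes the crawl produced the 24ways.org/<year>/<slug>/index.html layout shown above. Unlike the find command, it only counts pages that sit exactly two folders deep.

```python
from pathlib import Path


def count_article_pages(root):
    """Count files stored as <root>/<year>/<slug>/index.html."""
    # The glob only matches index.html files two directories below root,
    # so anything at other depths is ignored.
    return sum(1 for _ in Path(root).glob("*/*/index.html"))
```

Calling count_article_pages("24ways.org") from the folder where wget was run should give a number close to the one reported by find.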

Building a search index using SQLite

There are many tools out there that can be used to build a search engine. You can use an open-source search server like Elasticsearch or Solr, a hosted option like Algolia or Amazon CloudSearch or you can tap into the built-in search features of relational databases like MySQL or PostgreSQL.

I’m going to use something that’s less commonly used for web applications but makes for a powerful and extremely inexpensive alternative: SQLite.

SQLite is the world’s most widely deployed database, even though many people have never even heard of it. That’s because it’s designed to be used as an embedded database: it’s commonly used by native mobile applications and even runs as part of the default set of apps on the Apple Watch!

SQLite has one major limitation: unlike databases like MySQL and PostgreSQL, it isn’t really designed to handle large numbers of concurrent writes. For this reason, most people avoid it for building web applications.

This doesn’t matter nearly so much if you are building a search engine for infrequently updated content - say one for a site that only publishes new content on 24 days every year.

It turns out SQLite has very powerful full-text search functionality built into the core database - the FTS5 extension.
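To get a feel for FTS5 before building the real index, here’s a small sketch using Python’s built-in sqlite3 module. It assumes your Python was compiled against a SQLite build that includes FTS5 (most are, but not all). The docs table and its two rows are made up for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# FTS5 indexes live in virtual tables. This line raises
# sqlite3.OperationalError if FTS5 is not available in your build.
conn.execute("CREATE VIRTUAL TABLE docs USING fts5(title, body)")
conn.executemany(
    "INSERT INTO docs (title, body) VALUES (?, ?)",
    [
        ("Levelling Up", "Notes on running a small business"),
        ("Project Hubs", "A home base for design projects"),
    ],
)
# MATCH runs a full-text query against every indexed column
matches = [
    row[0]
    for row in conn.execute(
        "SELECT title FROM docs WHERE docs MATCH ? ORDER BY rank", ("design",)
    )
]
print(matches)  # prints: ['Project Hubs']
```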

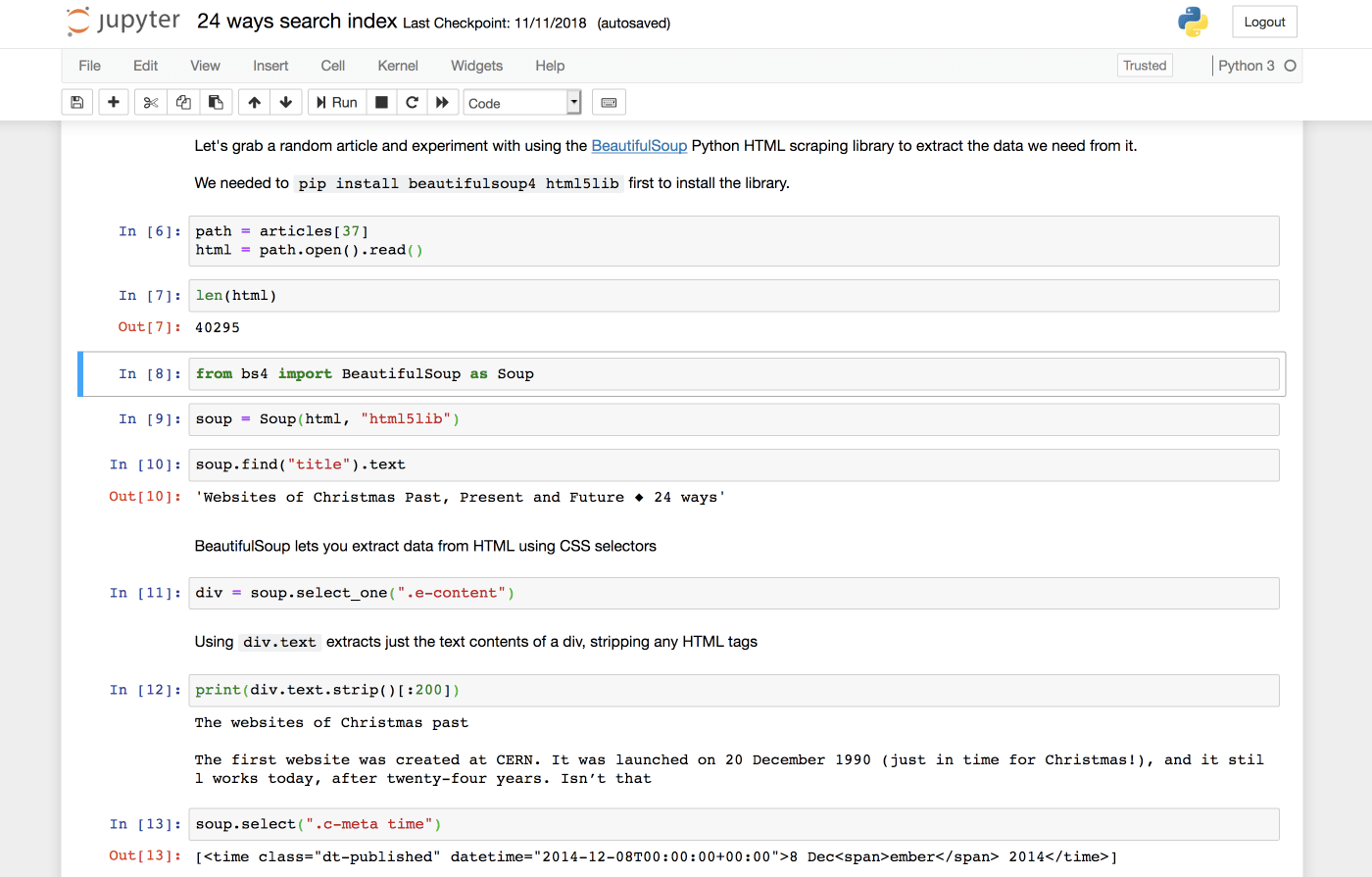

I’ve been doing a lot of work with SQLite recently, and as part of that, I’ve been building a Python utility library to make building new SQLite databases as easy as possible, called sqlite-utils. It’s designed to be used within a Jupyter notebook - an enormously productive way of interacting with Python code that’s similar to the Observable notebooks Natalie described on 24 ways yesterday.

If you haven’t used Jupyter before, here’s the fastest way to get up and running with it - assuming you have Python 3 installed on your machine. We can use a Python virtual environment to ensure the software we are installing doesn’t clash with any other installed packages:

$ python3 -m venv ./jupyter-venv

$ ./jupyter-venv/bin/pip install jupyter

# ... lots of installer output

# Now let's install some extra packages we will need later

$ ./jupyter-venv/bin/pip install beautifulsoup4 sqlite-utils html5lib

# And start the notebook web application

$ ./jupyter-venv/bin/jupyter-notebook

# This will open your browser to Jupyter at http://localhost:8888/

You should now be in the Jupyter web application. Click New -> Python 3 to start a new notebook.

A neat thing about Jupyter notebooks is that if you publish them to GitHub (either in a regular repository or as a Gist), it will render them as HTML. This makes them a very powerful way to share annotated code. I’ve published the notebook I used to build the search index on my GitHub account.

Here’s the Python code I used to scrape the relevant data from the downloaded HTML files. Check out the notebook for a line-by-line explanation of what’s going on.

from pathlib import Path
from bs4 import BeautifulSoup as Soup

base = Path("/Users/simonw/Dropbox/Development/24ways-search")
articles = list(base.glob("*/*/*/*.html"))
# articles is now a list of paths that look like this:
# PosixPath('...24ways-search/24ways.org/2013/why-bother-with-accessibility/index.html')

docs = []
for path in articles:
    year = str(path.relative_to(base)).split("/")[1]
    url = 'https://' + str(path.relative_to(base).parent) + '/'
    soup = Soup(path.open().read(), "html5lib")
    author = soup.select_one(".c-continue")["title"].split(
        "More information about"
    )[1].strip()
    author_slug = soup.select_one(".c-continue")["href"].split(
        "/authors/"
    )[1].split("/")[0]
    published = soup.select_one(".c-meta time")["datetime"]
    contents = soup.select_one(".e-content").text.strip()
    title = soup.find("title").text.split(" ◆")[0]
    try:
        topic = soup.select_one(
            '.c-meta a[href^="/topics/"]'
        )["href"].split("/topics/")[1].split("/")[0]
    except TypeError:
        # select_one() returned None: this article has no topic link
        topic = None
    docs.append({
        "title": title,
        "contents": contents,
        "year": year,
        "author": author,
        "author_slug": author_slug,
        "published": published,
        "url": url,
        "topic": topic,
    })

After running this code, I have a list of Python dictionaries representing each of the documents that I want to add to the index. The list looks something like this:

[

{

"title": "Why Bother with Accessibility?",

"contents": "Web accessibility (known in other fields as inclus...",

"year": "2013",

"author": "Laura Kalbag",

"author_slug": "laurakalbag",

"published": "2013-12-10T00:00:00+00:00",

"url": "https://24ways.org/2013/why-bother-with-accessibility/",

"topic": "design"

},

{

"title": "Levelling Up",

"contents": "Hello, 24 ways. I’m Ashley and I sell property ins...",

"year": "2013",

"author": "Ashley Baxter",

"author_slug": "ashleybaxter",

"published": "2013-12-06T00:00:00+00:00",

"url": "https://24ways.org/2013/levelling-up/",

"topic": "business"

},

...

My sqlite-utils library can take a list of objects like this and automatically create a SQLite database table with the right schema to store the data. Here’s how to do that using this list of dictionaries.

import sqlite_utils

db = sqlite_utils.Database("/tmp/24ways.db")

db["articles"].insert_all(docs)

That’s all there is to it! The library will create a new database and add a table to it called articles with the necessary columns, then insert all of the documents into that table.

(I put the database in /tmp/ for the moment - you can move it to a more sensible location later on.)
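If you’re curious what that one-liner saves you from writing, here’s a rough sketch of the same idea using only Python’s built-in sqlite3 module. This is not how sqlite-utils is implemented, and the real library handles far more cases. The insert_dicts helper and the SQLITE_TYPES mapping are names I made up, and the sketch assumes every dictionary has the same keys as the first one.

```python
import sqlite3

# Simplified mapping from Python types to SQLite column types (an assumption)
SQLITE_TYPES = {int: "INTEGER", float: "FLOAT", str: "TEXT"}


def insert_dicts(conn, table, rows):
    """Create `table` from the keys of the first row, then insert every row."""
    columns = list(rows[0].keys())
    column_defs = []
    for name in columns:
        # Anything that is not an int, float or str falls back to TEXT
        sql_type = SQLITE_TYPES.get(type(rows[0][name]), "TEXT")
        column_defs.append(f'"{name}" {sql_type}')
    joined_defs = ", ".join(column_defs)
    conn.execute(f'CREATE TABLE IF NOT EXISTS "{table}" ({joined_defs})')
    placeholders = ", ".join("?" for _ in columns)
    conn.executemany(
        f'INSERT INTO "{table}" VALUES ({placeholders})',
        [tuple(row.get(name) for name in columns) for row in rows],
    )
    conn.commit()
```

With a connection from sqlite3.connect("/tmp/24ways.db"), calling insert_dicts(conn, "articles", docs) would produce a table much like the one above.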

You can inspect the table using the sqlite3 command-line utility (which comes with OS X) like this:

$ sqlite3 /tmp/24ways.db

sqlite> .headers on

sqlite> .mode column

sqlite> select title, author, year from articles;

title                           author        year
------------------------------  ------------  ----------
Why Bother with Accessibility?  Laura Kalbag  2013
Levelling Up                    Ashley Baxte  2013
Project Hubs: A Home Base for   Brad Frost    2013
Credits and Recognition         Geri Coady    2013
Managing a Mind                 Christopher   2013
Run Ragged                      Mark Boulton  2013
Get Started With GitHub Pages   Anna Debenha  2013
Coding Towards Accessibility    Charlie Perr  2013
...
<Ctrl+D to quit>

There’s one last step to take in our notebook. We know we want to use SQLite’s full-text search feature, and sqlite-utils has a simple convenience method for enabling it for a specified set of columns in a table. We want to be able to search by the title, author and contents fields, so we call the enable_fts() method like this:

db["articles"].enable_fts(["title", "author", "contents"])

Introducing Datasette

Datasette is the open-source tool I’ve been building that makes it easy to both explore SQLite databases and publish them to the internet.

We’ve been exploring our new SQLite database using the sqlite3 command-line tool. Wouldn’t it be nice if we could use a more human-friendly interface for that?

If you don’t want to install Datasette right now, you can visit https://search-24ways.herokuapp.com/ to try it out against the 24 ways search index data. I’ll show you how to deploy Datasette to Heroku like this later in the article.

If you want to install Datasette locally, you can reuse the virtual environment we created to play with Jupyter:

./jupyter-venv/bin/pip install datasette

This will install Datasette in the ./jupyter-venv/bin/ folder. You can also install it system-wide using regular pip install datasette.

Now you can run Datasette against the 24ways.db file we created earlier like so:

./jupyter-venv/bin/datasette /tmp/24ways.db

This will start a local web server. Visit http://localhost:8001/ to start interacting with the Datasette web application.

If you want to try out Datasette without creating your own 24ways.db file you can download the one I created directly from https://search-24ways.herokuapp.com/24ways-ae60295.db

Publishing the database to the internet

One of the goals of the Datasette project is to make deploying data-backed APIs to the internet as easy as possible. Datasette has a built-in command for this, datasette publish. If you have an account with Heroku or Zeit Now, you can deploy a database to the internet with a single command. Here’s how I deployed https://search-24ways.herokuapp.com/ (running on Heroku’s free tier) using datasette publish:

$ ./jupyter-venv/bin/datasette publish heroku /tmp/24ways.db --name search-24ways

-----> Python app detected

-----> Installing requirements with pip

-----> Running post-compile hook

-----> Discovering process types

Procfile declares types -> web

-----> Compressing...

Done: 47.1M

-----> Launching...

Released v8

https://search-24ways.herokuapp.com/ deployed to Heroku

If you try this out, you’ll need to pick a different --name, since I’ve already taken search-24ways.

You can run this command as many times as you like to deploy updated versions of the underlying database.

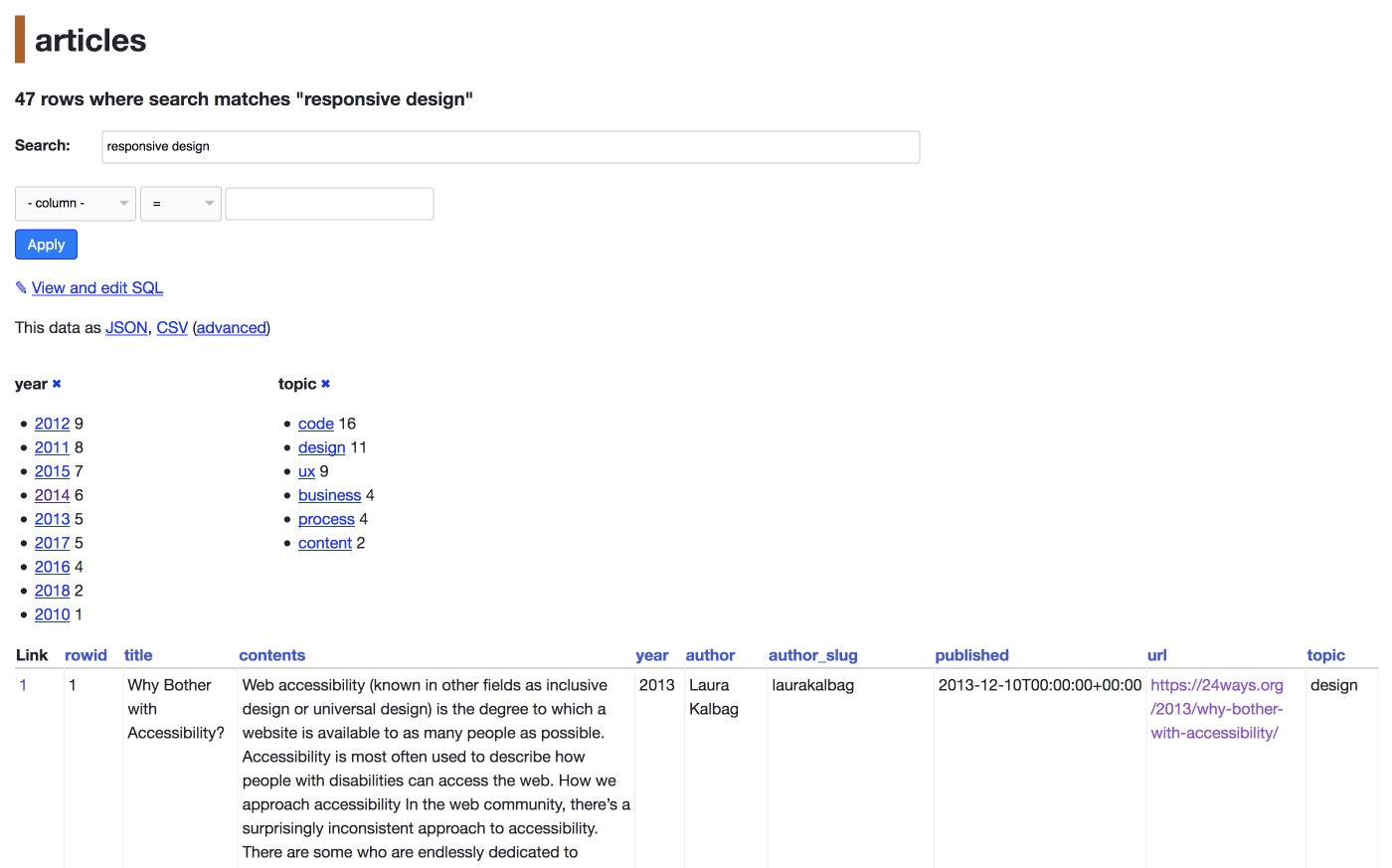

Searching and faceting

Datasette can detect tables with SQLite full-text search configured, and will add a search box directly to the page. Take a look at http://search-24ways.herokuapp.com/24ways-b607e21/articles to see this in action.

SQLite search supports wildcards, so if you want autocomplete-style search where you don’t need to enter full words to start getting results, you can add a * to the end of your search term. Here’s a search for acces* which returns articles on accessibility:

http://search-24ways.herokuapp.com/24ways-ae60295/articles?_search=acces%2A

A neat feature of Datasette is the ability to calculate facets against your data. Here’s a page showing search results for svg with facet counts calculated against both the year and the topic columns:

http://search-24ways.herokuapp.com/24ways-ae60295/articles?_search=svg&_facet=year&_facet=topic
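Conceptually, a facet count is a group-by over the matching rows. The sketch below shows that idea using Python’s sqlite3 module. It’s an illustration rather than Datasette’s actual implementation, and the facet_counts helper and the sample rows are made up.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE articles (title TEXT, year TEXT, topic TEXT)")
conn.executemany(
    "INSERT INTO articles VALUES (?, ?, ?)",
    [
        ("Sample article one", "2013", "design"),
        ("Sample article two", "2013", "code"),
        ("Sample article three", "2014", "design"),
    ],
)


def facet_counts(conn, column):
    """Return (value, count) pairs for one column, most common first."""
    # The column name is interpolated into the SQL, so only pass trusted names
    sql = (
        f'SELECT "{column}", count(*) AS n FROM articles '
        f'GROUP BY "{column}" ORDER BY n DESC, "{column}"'
    )
    return conn.execute(sql).fetchall()


print(facet_counts(conn, "year"))   # prints: [('2013', 2), ('2014', 1)]
print(facet_counts(conn, "topic"))  # prints: [('design', 2), ('code', 1)]
```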

Every page visible via Datasette has a corresponding JSON API, which can be accessed using the JSON link on the page - or by adding a .json extension to the URL:

http://search-24ways.herokuapp.com/24ways-ae60295/articles.json?_search=acces%2A
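If you’re calling this API from a script, it’s worth letting a library handle the URL encoding. Here’s a small sketch using Python’s urllib.parse. The search_url helper is a made-up name, and the code only builds the URL without fetching it, since the demo instance may not always be running.

```python
from urllib.parse import urlencode

BASE = "http://search-24ways.herokuapp.com/24ways-ae60295/articles.json"


def search_url(term, facets=()):
    """Build a JSON API URL for a wildcard search, with optional facets."""
    # Append * so partial words match, then let urlencode escape it as %2A
    params = [("_search", term + "*")]
    params.extend(("_facet", column) for column in facets)
    return BASE + "?" + urlencode(params)


print(search_url("acces"))
# prints: http://search-24ways.herokuapp.com/24ways-ae60295/articles.json?_search=acces%2A
```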

Better search using custom SQL

The search results we get back from ../articles?_search=svg are OK, but the order they are returned in is not ideal - they’re actually being returned in the order they were inserted into the database! You can see why this is happening by clicking the View and edit SQL link on that search results page.

This exposes the underlying SQL query, which looks like this:

select rowid, * from articles where rowid in (

select rowid from articles_fts where articles_fts match :search

) order by rowid limit 101

We can do better than this by constructing a custom SQL query. Here’s the query we will use instead:

select

snippet(articles_fts, -1, 'b4de2a49c8', '8c94a2ed4b', '...', 100) as snippet,

articles_fts.rank, articles.title, articles.url, articles.author, articles.year

from articles

join articles_fts on articles.rowid = articles_fts.rowid

where articles_fts match :search || "*"

order by rank limit 10;

You can try this query out directly - since Datasette opens the underlying SQLite database in read-only mode and enforces a one-second time limit on queries, it’s safe to allow users to provide arbitrary SQL select queries for Datasette to execute.

There’s a lot going on here! Let’s break the SQL down line-by-line:

select

snippet(articles_fts, -1, 'b4de2a49c8', '8c94a2ed4b', '...', 100) as snippet,

We’re using snippet(), a built-in SQLite function, to generate a snippet highlighting the words that matched the query. We use two unique strings that I made up to mark the beginning and end of each match - you’ll see why in the JavaScript later on.

articles_fts.rank, articles.title, articles.url, articles.author, articles.year

These are the other fields we need back - most of them are from the articles table but we retrieve the rank (representing the strength of the search match) from the magical articles_fts table.

from articles

join articles_fts on articles.rowid = articles_fts.rowid

articles is the table containing our data. articles_fts is a magic SQLite virtual table which implements full-text search - we need to join against it to be able to query it.

where articles_fts match :search || "*"

order by rank limit 10;

:search || "*" takes the ?search= argument from the page querystring and adds a * to the end of it, giving us the wildcard search that we want for autocomplete. We then match that against the articles_fts table using the match operator. Finally, we order by rank so that the best matching results are returned at the top - and limit to the first 10 results.
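To experiment with this query away from Datasette, here’s a sketch that runs a cut-down version of it with Python’s sqlite3 module against a tiny in-memory database. The table layout is my assumption of what an FTS5 index over articles looks like, the article text is placeholder content, and I’ve swapped the marker strings for [ and ] and shortened the snippet length to keep the output readable. It needs a SQLite build with FTS5.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE articles (title TEXT, author TEXT, contents TEXT, url TEXT, year TEXT);
    INSERT INTO articles VALUES
      ('Why Bother with Accessibility?', 'Laura Kalbag',
       'Web accessibility matters to everyone who uses the web.',
       'https://24ways.org/2013/why-bother-with-accessibility/', '2013'),
      ('Levelling Up', 'Ashley Baxter',
       'Hello, 24 ways. This one is about running a business.',
       'https://24ways.org/2013/levelling-up/', '2013');
    -- Assumed layout: an FTS5 table indexing three columns of articles
    CREATE VIRTUAL TABLE articles_fts USING fts5(
      title, author, contents, content='articles'
    );
    INSERT INTO articles_fts (rowid, title, author, contents)
      SELECT rowid, title, author, contents FROM articles;
    """
)

SQL = """
select
  snippet(articles_fts, -1, '[', ']', '...', 10) as snippet,
  articles_fts.rank, articles.title, articles.url
from articles
  join articles_fts on articles.rowid = articles_fts.rowid
where articles_fts match :search || '*'
order by rank limit 10
"""


def search(term):
    """Run the wildcard search and return the matching rows as tuples."""
    return conn.execute(SQL, {"search": term}).fetchall()


for snippet, rank, title, url in search("acces"):
    print(title, "|", snippet)
```

Typing only acces is enough to find the accessibility article, because the query appends * before matching.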

How do we turn this into an API? As before, the secret is to add the .json extension. Datasette actually supports multiple shapes of JSON - we’re going to use ?_shape=array to get back a plain array of objects:

JSON API call to search for articles matching SVG

The HTML version of that page shows the time taken to execute the SQL in the footer. Hitting refresh a few times, I get response times between 2 and 5ms - easily fast enough to power a responsive autocomplete feature.

A simple JavaScript autocomplete search interface

I considered building this using React or Svelte or another of the myriad of JavaScript framework options available today, but then I remembered that vanilla JavaScript in 2018 is a very productive environment all on its own.

We need a few small utility functions: first, a classic debounce function adapted from this one by David Walsh:

function debounce(func, wait, immediate) {

let timeout;

return function() {

let context = this, args = arguments;

let later = () => {

timeout = null;

if (!immediate) func.apply(context, args);

};

let callNow = immediate && !timeout;

clearTimeout(timeout);

timeout = setTimeout(later, wait);

if (callNow) func.apply(context, args);

};

};

We’ll use this to hold off sending a fetch() request until the user has paused typing for 100ms.

Since we’re rendering data that might include HTML tags (24 ways is a site about web development after all), we need an HTML escaping function. I’m amazed that browsers still don’t bundle a default one of these:

// Escape & first, so the entities added by the later steps are not escaped twice
const htmlEscape = (s) => s.replace(
  /&/g, '&amp;'
).replace(
  />/g, '&gt;'
).replace(
  /</g, '&lt;'
).replace(
  /"/g, '&quot;'
).replace(
  /'/g, '&#39;'
);

We need some HTML for the search form, and a div in which to render the results:

<h1>Autocomplete search</h1>

<form>

<p><input id="searchbox" type="search" placeholder="Search 24ways" style="width: 60%"></p>

</form>

<div id="results"></div>

And now the autocomplete implementation itself, as a glorious, messy stream-of-consciousness of JavaScript:

// Embed the SQL query in a multi-line backtick string:

const sql = `select

snippet(articles_fts, -1, 'b4de2a49c8', '8c94a2ed4b', '...', 100) as snippet,

articles_fts.rank, articles.title, articles.url, articles.author, articles.year

from articles

join articles_fts on articles.rowid = articles_fts.rowid

where articles_fts match :search || "*"

order by rank limit 10`;

// Grab a reference to the <input type="search">

const searchbox = document.getElementById("searchbox");

// Used to avoid race-conditions:

let requestInFlight = null;

searchbox.onkeyup = debounce(() => {

const q = searchbox.value;

// Construct the API URL, using encodeURIComponent() for the parameters

const url = (

"https://search-24ways.herokuapp.com/24ways-866073b.json?sql=" +

encodeURIComponent(sql) +

`&search=${encodeURIComponent(q)}&_shape=array`

);

// Unique object used just for race-condition comparison

let currentRequest = {};

requestInFlight = currentRequest;

fetch(url).then(r => r.json()).then(d => {

if (requestInFlight !== currentRequest) {

// Avoid race conditions where a slow request returns

// after a faster one.

return;

}

let results = d.map(r => `

<div class="result">

<h3><a href="${r.url}">${htmlEscape(r.title)}</a></h3>

<p><small>${htmlEscape(r.author)} - ${r.year}</small></p>

<p>${highlight(r.snippet)}</p>

</div>

`).join("");

document.getElementById("results").innerHTML = results;

});

}, 100); // debounce every 100ms

There’s just one more utility function, used to help construct the HTML results:

const highlight = (s) => htmlEscape(s).replace(

/b4de2a49c8/g, '<b>'

).replace(

/8c94a2ed4b/g, '</b>'

);

This is what those unique strings passed to the snippet() function were for.
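The order of operations matters here: escape first, then swap in the tags, so the only HTML that survives is the markup we add ourselves. Here’s the same idea sketched in Python, as you might write it if you were rendering results on the server instead. It uses the standard library’s html.escape, and the sample snippet text is made up.

```python
import html

# The same made-up marker strings passed to snippet() in the SQL query
START, END = "b4de2a49c8", "8c94a2ed4b"


def highlight(snippet):
    """Escape the snippet, then turn the marker strings into <b> tags."""
    escaped = html.escape(snippet, quote=True)
    # The markers contain only letters and digits, so escaping leaves them intact
    return escaped.replace(START, "<b>").replace(END, "</b>")


print(highlight("Use <svg> for b4de2a49c8icons8c94a2ed4b & logos"))
# prints: Use &lt;svg&gt; for <b>icons</b> &amp; logos
```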

Avoiding race conditions in autocomplete

One trick in this code that you may not have seen before is the way race-conditions are handled. Any time you build an autocomplete feature, you have to consider the following case:

- User types acces
- Browser sends request A - querying documents matching acces*
- User continues to type accessibility
- Browser sends request B - querying documents matching accessibility*
- Request B returns. It was fast, because there are fewer documents matching the full term
- The results interface updates with the documents from request B, matching accessibility*
- Request A returns results (this was the slower of the two requests)
- The results interface updates with the documents from request A - results matching acces*

This is a terrible user experience: the user saw their desired results for a brief second, and then had them snatched away and replaced with those results from earlier on.

Thankfully there’s an easy way to avoid this. I set up a variable in the outer scope called requestInFlight, initially set to null.

Any time I start a new fetch() request, I create a new currentRequest = {} object and assign it to the outer requestInFlight as well.

When the fetch() completes, I use requestInFlight !== currentRequest to sanity check that the currentRequest object is strictly identical to the one that was in flight. If a new request has been triggered since we started the current request we can detect that and avoid updating the results.
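The pattern isn’t specific to JavaScript. Here’s a sketch of the same trick in Python with asyncio, where asyncio.sleep() stands in for the network request and the delays are picked so that the first request is the slow one. The LatestOnly class is a made-up name for illustration.

```python
import asyncio


class LatestOnly:
    """Apply only the result of the most recently started request."""

    def __init__(self):
        self.in_flight = None  # token identifying the newest request
        self.results = None

    async def search(self, term, delay):
        token = object()  # unique per request, compared by identity
        self.in_flight = token
        await asyncio.sleep(delay)  # stands in for the network round trip
        if self.in_flight is not token:
            return  # a newer request has started, so drop this stale result
        self.results = f"results for {term}*"


async def main():
    ui = LatestOnly()
    # Request A is slow and request B is fast, as in the scenario above
    a = asyncio.create_task(ui.search("acces", 0.2))
    await asyncio.sleep(0.01)
    b = asyncio.create_task(ui.search("accessibility", 0.05))
    await asyncio.gather(a, b)
    return ui.results


print(asyncio.run(main()))
# prints: results for accessibility*
```

Request A still finishes last, but by then its token no longer matches, so its results are thrown away.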

It’s not a lot of code, really

And that’s the whole thing! The code is pretty ugly, but when the entire implementation clocks in at fewer than 70 lines of JavaScript, I honestly don’t think it matters. You’re welcome to refactor it as much as you like.

How good is this search implementation? I’ve been building search engines for a long time using a wide variety of technologies and I’m happy to report that using SQLite in this way is genuinely a really solid option. It scales happily up to hundreds of MBs (or even GBs) of data, and the fact that it’s based on SQL makes it easy and flexible to work with.

A surprisingly large number of desktop and mobile applications you use every day implement their search feature on top of SQLite.

More importantly though, I hope that this demonstrates that using Datasette for an API means you can build relatively sophisticated API-backed applications with very little backend programming effort. If you’re working with a small-to-medium amount of data that changes infrequently, you may not need a more expensive database. Datasette-powered applications easily fit within the free tier of both Heroku and Zeit Now.

For more of my writing on Datasette, check out the datasette tag on my blog. And if you do build something fun with it, please let me know on Twitter.

About the author

Simon Willison is an engineering director at Eventbrite, a Bay Area ticketing company working to bring the world together through live experiences.

Simon joined Eventbrite through their acquisition of Lanyrd, a Y Combinator funded company he co-founded in 2010. He is a co-creator of the Django Web Framework, and has been blogging about web development and programming since 2002 at https://simonwillison.net/

Simon is the creator of Datasette, a new tool for publishing structured data as a web API. Datasette is based on his experiences working as a data journalist at the UK’s Guardian newspaper.